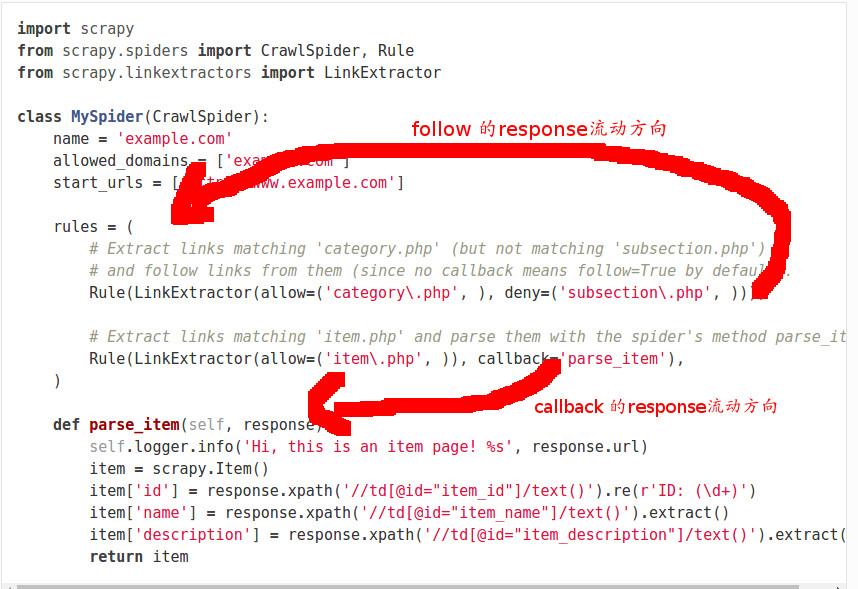

This could be the web crawling task, while the web scraping task could be to collect the title and price of each book from its dedicated book page. To find every category, you can instruct the crawler to visit all the different book pages, discover any secondary categories linked from them, and then collect every page that is a category page. In this case that would be fairly trivial, because a sidebar already lists all the categories. In a real-world project, however, you will often see only something like the top 10 categories as clickable links. The other hundred or so have to be discovered some other way, for example by opening a book page that lists another 10 subcategories for that book. That is the mission of our web crawler: it follows links and keeps every link whose URL contains the /catalogue/category pattern. So one task is to instruct the crawler to find all the links that match this pattern.

In the next section, we are going to create a web crawler using Scrapy, which will help us eliminate these limitations.

Basically, BFS (breadth-first search) explores the site level by level, so it reaches each page along the shortest path, i.e. the fewest link hops, from the start page. If you want to learn more about BFS and DFS, read this guide. Which traversal order works better depends on the structure of the website and the goals of the crawling operation.
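The link-following idea above can be sketched with Python's standard library. This is a minimal sketch, not Scrapy code. It assumes a hypothetical `fetch(url)` callable that returns a page's HTML or `None`. Here that callable is backed by a small in-memory dictionary of example.com pages instead of real HTTP requests. The names `crawl_categories`, `extract_links`, `LinkExtractor` and `PAGES` are illustrative only. The crawl is breadth-first: a FIFO queue visits pages level by level, and the crawler keeps every same-site URL whose path contains /catalogue/category.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag fed to it."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(html, base_url):
    """Return absolute, fragment-free URLs for all <a href> links in `html`."""
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return [urldefrag(urljoin(base_url, href))[0] for href in parser.links]


def crawl_categories(start_url, fetch, pattern="/catalogue/category", max_pages=1000):
    """Breadth-first crawl of one site; returns URLs whose path contains `pattern`.

    `fetch(url)` is a hypothetical callable returning page HTML or None.
    """
    site = urlparse(start_url).netloc
    seen = {start_url}          # URLs already queued, so no page is visited twice
    queue = deque([start_url])  # FIFO queue: pages are visited level by level (BFS)
    categories = []
    visited = 0
    while queue and visited < max_pages:
        url = queue.popleft()
        visited += 1
        html = fetch(url)
        if html is None:        # page could not be fetched; skip it
            continue
        if pattern in urlparse(url).path:
            categories.append(url)
        for link in extract_links(html, url):
            # Stay on the same site and skip anything already queued.
            if urlparse(link).netloc == site and link not in seen:
                seen.add(link)
                queue.append(link)
    return categories


# Hypothetical miniature site standing in for real HTTP responses.
PAGES = {
    "https://example.com/": (
        '<a href="/catalogue/book-1/">Book 1</a>'
        '<a href="/catalogue/category/travel/">Travel</a>'
    ),
    "https://example.com/catalogue/book-1/": (
        '<a href="/catalogue/category/poetry/">Poetry</a>'
        '<a href="https://other.example.org/x">External</a>'
    ),
    "https://example.com/catalogue/category/travel/": (
        '<a href="/catalogue/book-1/">Book 1</a>'
    ),
    "https://example.com/catalogue/category/poetry/": "",
}

if __name__ == "__main__":
    for url in crawl_categories("https://example.com/", PAGES.get):
        print(url)
```

On this toy site, the crawl reports the travel category first, because it is one hop from the start page. The poetry category comes second: it is two hops away and is linked only from a book page, which mirrors the case described above where categories are found by going into a book. The `max_pages` cap is a simple safeguard against crawling an unexpectedly large site.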
